Originally published on the Empirica blog

Welcome to “Empirica Stories”, a series in which we highlight innovative research from the Empirica community, showcasing the possibilities of virtual lab experiments!

In our first interview, we highlight the creative work of Christopher To and collaborators on “Victorious and Hierarchical: Past Performance as a Determinant of Team Hierarchical Differentiation,” published in Organization Science. For this project, Christopher To, an incoming Assistant Professor at Rutgers SMLR, was joined by Tom Taiyi Yan (Assistant Professor at UCL School of Management) and Elad Sherf (Assistant Professor at the University of North Carolina at Chapel Hill’s Kenan-Flagler Business School).

Chris’ research explores the antecedents and consequences of competition and inequality. In some of his ongoing work, he asks questions such as “When do hierarchy and inequality reproduce in teams?”, “How does inequality change our ethical standards?”, and “How does inequality shape the meaning of work?” Tom’s research examines competition and social network effects such as brokerage and structural holes, as well as gender disparity in the workplace. Elad’s research investigates managers’ resistance to feedback and input from others, and the structural and psychological barriers managers face in trying to be fair to others at work.

Tell us about your experiment!

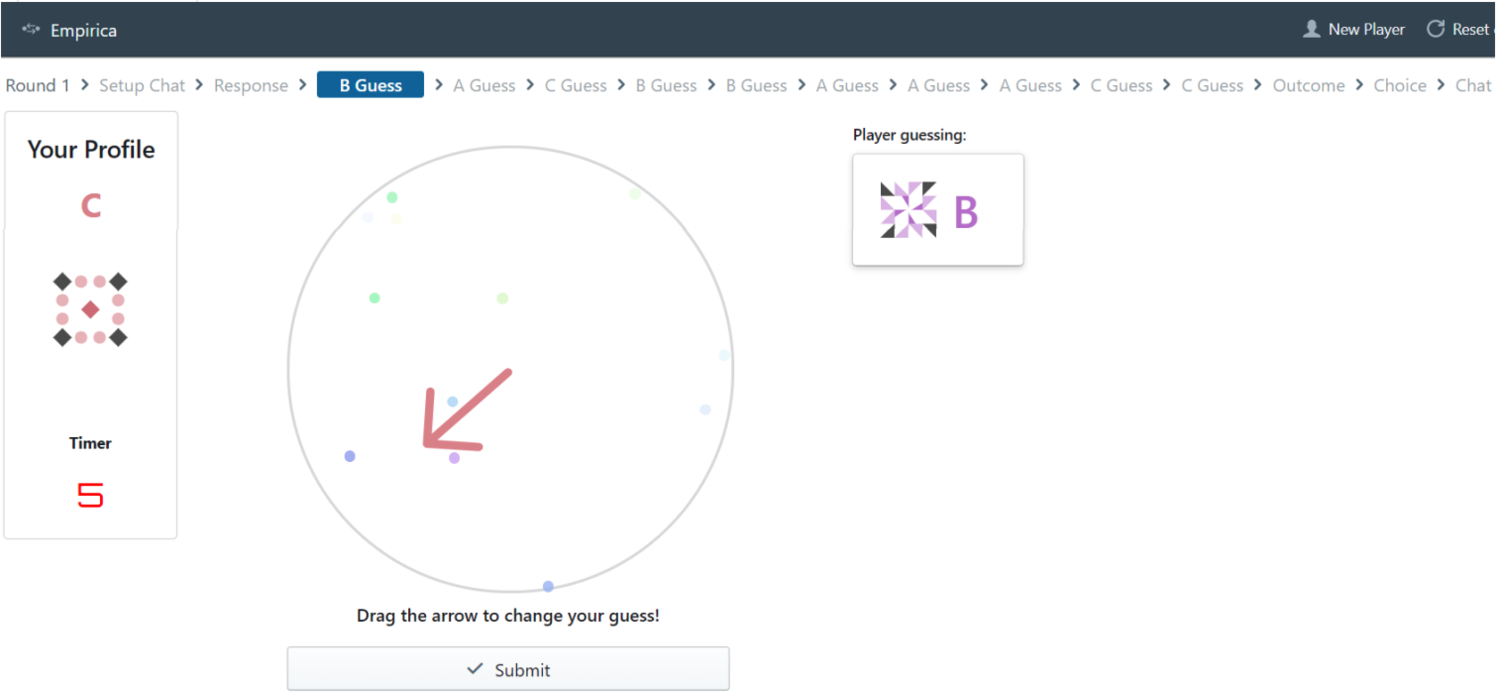

We recruited approximately 750 participants from an online panel and assigned them to teams of three. Teams completed a spatial judgment task (i.e., guessing the direction of moving dots) and earned points based on the accuracy of each team member’s guess. The “catch” was that each team had only 10 guesses in total, and the team had to decide how many of those guesses each member received. How would this distribution of guesses differ depending on whether a team was told it was performing well or poorly (our experimental manipulation)?

What helped us was that the game was fairly interactive. Participants could watch their teammates guess in real time, and after receiving performance feedback they had to decide as a team (via real-time chat) how to allocate their guesses. Any decision one team member made was immediately visible to the others. Relative to other online experiments in our field, this created a genuine sense that participants were part of a live team.

Screenshot of the experiment interface: Participants were tasked with guessing the direction in which a collection of dots was moving on the screen, where half of the dots moved together in the same direction, and the remaining half moved in random directions.
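To make the stimulus in the caption concrete, here is a minimal Python sketch of how per-dot headings could be generated when half of the dots share one direction and the rest move randomly. This is not the authors’ implementation (their study was built on Empirica, which is JavaScript-based). The function names `dot_directions` and `angular_error`, the use of degrees, and the idea of scoring a guess by its angular distance from the true direction are all hypothetical assumptions for illustration; the post does not say how accuracy was scored.

```python
import random


def dot_directions(n_dots, signal_deg, coherence=0.5, seed=None):
    """Return a heading in degrees for each dot (hypothetical helper).

    A fraction `coherence` of the dots all move in `signal_deg`; the
    remaining dots get independent, uniformly random headings.
    """
    rng = random.Random(seed)
    n_signal = round(n_dots * coherence)
    headings = [signal_deg % 360.0] * n_signal
    headings += [rng.uniform(0.0, 360.0) for _ in range(n_dots - n_signal)]
    rng.shuffle(headings)  # mix signal and noise dots so order reveals nothing
    return headings


def angular_error(guess_deg, signal_deg):
    """Smallest absolute difference between two headings (0 to 180 degrees).

    Assumed scoring rule for illustration only.
    """
    diff = abs(guess_deg - signal_deg) % 360.0
    return min(diff, 360.0 - diff)


if __name__ == "__main__":
    headings = dot_directions(100, 45, seed=1)
    print(headings.count(45.0))    # 50 dots share the signal direction
    print(angular_error(350, 10))  # 20.0: the error wraps around 0 degrees
```

Measuring error on the circle (rather than as a plain difference) matters because a guess of 350° is only 20° away from a true heading of 10°.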

What parts of Empirica’s functionality made implementing your experimental design particularly easy?

One neat thing about this study was that we did not need to manipulate network structures. In fact, we approached the platform as teams researchers and used Empirica primarily to create online teams that could play a game in real time. In a COVID world, Empirica provides a convenient solution to a huge logistical problem faced by teams researchers. It also opens opportunities for non-network researchers who want to study how teams interact in a more digital context.

The flexibility of the platform is incredible: for almost any game, task, or design you can think of, you can likely find a way to implement it. The data it collects is also wonderful; essentially every action participants take is recorded and can be analyzed. We found this helpful in the review process, as some reviewers were curious about the content of the chat logs and what and how teams were communicating. Luckily, Empirica captured this data, and we were able to address the reviewers’ concerns.

How much effort did it take to get your experiment up and running? Did you develop it or outsource?

We outsourced a large portion of the experiment to a freelancer. There was a small learning curve for the programmer to learn the system, but it was relatively short, and programmers who know JavaScript are in high supply. It was quite easy to work with the freelancer. For the front end, we just told him what we wanted and provided some sample screenshots, and he made it work; for the back end, we told him which actions should be recorded and what we wanted the final output CSV/Excel file to look like. He took care of the rest.

For smaller back-end details (e.g., changing questionnaire wording), the lead author had a modest but sufficient programming background to make changes on the fly. Programming experience is extremely helpful, but perhaps not necessary.

Are there any interesting workarounds you came up with when Empirica didn’t do exactly what you needed?

Not that we can think of. Perhaps the main challenge was participant recruitment. We recruited respondents from Amazon Mechanical Turk, and they would sometimes join a room (by clicking the game link) and then walk away from their computer. As a result, virtual teams/rooms were sometimes created, but the games could not progress because a participant was not at their computer.

To address this, we scheduled sessions that respondents had to actively join (e.g., games took place at 9 AM local time). This ensured that respondents deliberately set aside time to opt into a game at its scheduled time, rather than passively joining by clicking the link while browsing.

What value do you think virtual lab experiments can add to your field of research?

Empirica helped us (as teams researchers) in three ways. First, it provided us with a scalable and convenient context in which to study teams. Naturally, bringing people into a laboratory during COVID is difficult. Even when you can bring people into the lab, data collection is usually slow and cumbersome. With Empirica, data collection was faster and easily scalable. Second, new methods can introduce new questions. Empirica makes it possible to introduce factors such as multiple rounds, member turnover, and repeated interactions, which may be more difficult to explore in traditional laboratory settings. Third, because it is web-based, Empirica provides flexibility in how complex or simple you want the task to be. If you can design a game in a web browser, you can design it in Empirica.

—

Learn more about Empirica by visiting the Empirica website or our Group Dynamics project page.

AUTHORS

ABDULLAH ALMAATOUQ


Affiliated researcher

Massachusetts Institute of Technology